The algorithm isn't sexist.

Ada Lovelace, IBM punch cards, and the uncomfortable truth about algorithmic bias

Whatever We Know How to Order It to Perform

Lord Byron had expected (had demanded, really) a “glorious boy.” He was disappointed. In December 1815, in a London still lit by candles and warmed by coal fires, his wife Anne Isabella Milbanke gave birth to a baby girl. He named the child after his half-sister Augusta (but everyone called her “Ada”) and then, a month later, commanded his wife (and daughter) to leave. He never saw either of them again and died in Greece eight years later, fighting in someone else’s war.

Ada grew up knowing her father only through his scandalous reputation and a portrait she wasn’t permitted to see until her twentieth birthday. As for Lady Byron, she did what anxious mothers have always done: she made a plan.

Ada would be educated in mathematics and logic, disciplines that Lady Byron apparently believed to be incompatible with her husband’s poetic madness.

She was frequently ill as a child. Measles paralysed her for nearly a year at thirteen. But she pursued her studies with a tenacity that suggests her father’s temperament had survived the mathematical curriculum intact.

This was not, however, a dreamy child. This was a systematic mind, already asking what machines could do.

At twelve, she decided she wanted to fly. Not metaphorically fly. Actually fly. She investigated materials for wings, examined the anatomy of birds to determine proper proportions, and even planned a book she would call Flyology. The final step, she planned, would be to combine steam power with “the art of flying.”

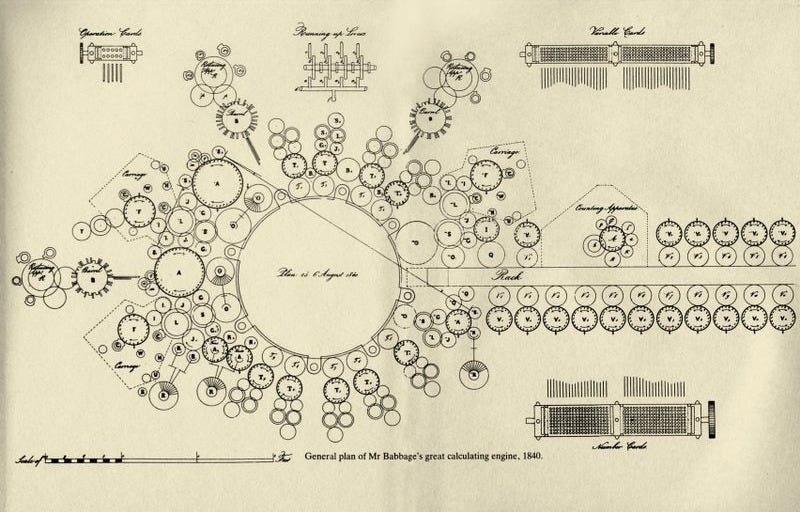

At seventeen, through her tutor Mary Somerville, Ada met Charles Babbage, a mathematician and inventor who would soon begin designing something he called the Analytical Engine: a mechanical general-purpose computer, a contraption of brass gears and punch cards that existed only in drawings and, apart from a few small trial parts, would never be built in Babbage’s lifetime. Most people didn’t understand it. But Ada did. She even pointed out a few of his errors. Babbage called her “The Enchantress of Number.”

When she was asked to translate a paper about the Analytical Engine from French into English, she did considerably more than translate. She augmented it with seven explanatory notes, labelled A through G, that together ran about three times the length of the original article. The last of them, Note G, set out a detailed method for calculating Bernoulli numbers on the Engine, and it is often called the first published computer program.

It should come as no surprise, therefore, that Lovelace saw further than Babbage himself. Where he imagined a sophisticated calculator, she, practising what she called “poetical science,” imagined a machine that could eventually manipulate symbols, compose music, and process any information expressible in logical relations.

Ada Lovelace, more or less, anticipated the modern computer by a century.

But she also anticipated its limits.

In her notes, Ada wrote:

“The Analytical Engine has no pretensions whatever to originate anything. It can do whatever we know how to order it to perform. It can follow analysis, but it has no power of anticipating any analytical relations or truths.”

Please note the phrase: whatever we know how to order it to perform.

I’ve been brooding about this sentence for quite some time now, because Ada understood something about machines that we have largely chosen to forget, particularly now, when we speak of algorithms as if they were judges rather than mirrors, and of artificial intelligence as if it understood anything it is doing.

In past newsletters, I’ve argued that AI does not think or understand anything. It merely executes instructions with perfect obedience and absolutely no comprehension of what those instructions mean. In this week’s newsletter, I want to discuss what that means for us.

Because had the world’s first computer programmer been a man, I have a feeling a lot more people would be talking about that warning, and about the person who wrote it.

The Experiment Everyone Is Talking About

A few weeks ago, women on LinkedIn began conducting an experiment of the kind Ada might have designed. Many changed nothing but their profile gender, from female to male, and watched what happened.

The results were gobsmacking.

Lucy Ferguson, who changed her name from Lucy to Luke for 24 hours, reported that her content impressions increased by 818 percent.

Jessica Doyle Mekkes changed only her gender marker and saw her reach rise 700 percent. Rosie Taylor switched to male and watched her “people reached” stat climb 220 percent.

Cass Cooper tried the same experiment, and her visibility dropped. She attributed this to the intersection of gender and race: her profile now presented as a Black man, and the algorithm, it seems, had opinions about that, too.

LinkedIn’s head of responsible AI, Sakshi Jain, responded with a blog post explaining that the platform’s “algorithm and AI systems do not use demographic information (such as age, race, or gender) as a signal to determine the visibility of content.”

This is almost certainly true. But it also rather misses the point.

The Relationship Between Demographics and Psychographics

The algorithm doesn’t need to know your gender to discriminate by gender. It needs only “whatever we know how to order it to perform”: that is, to learn from historical data which patterns correlate with “high-quality professional content,” and to reproduce those patterns with tireless efficiency.

In other words, it operates like a bloodhound trained exclusively on rabbit trails. Show it any path that smells familiar, and it follows with enthusiasm. Show it a different kind of trail (equally valid, leading to equally good quarry) and the dog just stands there, confused, waiting for a scent it recognises.

The bloodhound isn’t malicious. It isn’t even aware that rabbits exist. It just knows: this smells right, that doesn’t.

Researchers call this “proxy bias.” The algorithm doesn’t see gender—it sees career gaps, communication styles, topic choices, network patterns. Patterns that happen to correlate with gender in ways the system neither knows nor cares about. The outcome is discriminatory even though the intent is neutral.
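For readers who think better in code, here is a toy sketch of proxy bias. Everything in it is an assumption made for illustration: the two groups (labelled A and B), the topics, the engagement numbers, and the function names are all hypothetical, and no real platform ranks content this simply. The scorer never reads the group label; it only averages past engagement per topic.

```python
from collections import defaultdict

# Hypothetical historical data: (group, topic, engagement).
# "group" stands in for a demographic label. The scorer below never reads it;
# it is kept only so we can audit who ends up favoured.
HISTORY = [
    ("A", "topic_x", 90), ("A", "topic_x", 80), ("A", "topic_y", 70),
    ("B", "topic_z", 30), ("B", "topic_z", 40), ("B", "topic_x", 85),
]

NEW_POSTS = [
    {"group": "A", "topic": "topic_x"},
    {"group": "A", "topic": "topic_y"},
    {"group": "B", "topic": "topic_z"},
    {"group": "B", "topic": "topic_x"},
]


def average(values):
    return sum(values) / len(values)


def learn_topic_scores(history):
    """Average past engagement per topic. The group field is deliberately ignored."""
    by_topic = defaultdict(list)
    for _group, topic, engagement in history:
        by_topic[topic].append(engagement)
    return {topic: average(values) for topic, values in by_topic.items()}


def score(post, topic_scores):
    """Rank a new post using only its topic, never its author's group."""
    return topic_scores.get(post["topic"], 0.0)


def mean_score_by_group(posts, topic_scores):
    """Audit step: the only place the group label is used at all."""
    by_group = defaultdict(list)
    for post in posts:
        by_group[post["group"]].append(score(post, topic_scores))
    return {group: average(values) for group, values in by_group.items()}


topic_scores = learn_topic_scores(HISTORY)
print(topic_scores)                                  # {'topic_x': 85.0, 'topic_y': 70.0, 'topic_z': 35.0}
print(mean_score_by_group(NEW_POSTS, topic_scores))  # {'A': 77.5, 'B': 60.0}
```

Note that a group B post on topic_x scores exactly as well as a group A post on the same topic. The gap appears only because the two groups’ posts are spread across topics differently, and the historical engagement attached to those topics does the rest.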

But to blame the bloodhound seems beside the point. The dog doesn’t know what it’s doing. It simply follows the scent it was trained to follow.

So if the training data happens to reflect a world in which certain voices have been treated as authoritative and others have been rendered invisible, then the algorithm will faithfully (read: unfortunately) encode that world.

It works like a casting director who’s spent thirty years filling roles with a certain type. He’s not thinking about gender. He’s thinking: strong jaw, commanding voice, takes up space. He genuinely believes he’s casting for talent.

But to blame him seems beside the point. He doesn’t know what he’s doing. He just knows what a star looks like.

Why Someone Called Me Out

Which brings me to something uncomfortable.

Last week, I published an essay about LinkedIn’s algorithm. It was technical, reasonably thorough, and, by most measures, well-received. People shared it. People called it “definitive.”

And then Dr. Gillian Marcelle, a scholar of technology with forty years of expertise in exactly this field (and several others), wrote a LinkedIn post that said the following:

“Your essay is being hailed as definitive and brilliant, precisely because you leave out the issues that make folks uncomfortable.”

And while algorithmic bias wasn’t the main point of my newsletter, she wasn’t wrong.

What Gillian was pointing out (and what Emily Goldenritt and Philip Mix elaborated on in the thread that followed) is something I had genuinely missed. Not because I’m dim-witted or wasn’t paying attention. But because the very thing I was writing about was actively working in my favour while I was writing about it.

The algorithm heard me. But not because what I said was more original, or more rigorous, or more true than what more qualified Black women scholars have been saying about algorithmic bias for years. The algorithm heard me because of who I am and because of how the world perceives me.

My first reaction was to call this B.S. If anything made my content stand out over other people’s, I thought, it was because I’m better at creating content than they are. By my philosophy of these things, that simply means I am better at showmanship and self-publicity. I then gave my usual advice on how to do what I do. When Gillian replied that she typically gets advice from “men colored like me,” I was offended. “What the hell does that have to do with this discussion?”

Turns out it has everything to do with this discussion. And my initial reaction proved it.

I spent energy in that thread defending my identity (explaining that I identify as Jewish, not white) as if my internal sense of self somehow negated the structural advantage I receive from how systems perceive me.

As Emily put it plainly: “Whether you identify as white is not what gives you white privilege. It’s the world that perceives you as white, and rewards you accordingly.”

The algorithm doesn’t care how I identify. It cares how I’m perceived.

To be clear, I am referring only to my reposting of the newsletter on LinkedIn. The newsletter itself, which lands directly in subscribers’ inboxes, is not subject to the feed’s algorithmic bias. But it could have been subject to a different kind of bias. A worse kind…

The Ghost in the Training Data

Safiya Umoja Noble, a professor at UCLA whose work on algorithmic discrimination earned her a MacArthur Fellowship, has documented this with uncomfortable precision. In 2009, when she searched Google for “Black girls,” the first page of results was dominated by pornography. The same search for “white girls” produced radically different results.

Noble calls this “data discrimination.” She rejects (quite rightly, it seems to me) the idea that search engines are neutral. Like all things man-made, they encode the biases of the people who designed them and of the societies that generated the training data. But I also think “data discrimination” is an unfortunate name that may lead people down the wrong path, because some may confuse the data with the algorithm. And the sad but true fact is that, however discriminatory the data may be, the algorithm (or search engine) using it isn’t being malicious. It is being efficient: it surfaces content that matches the patterns in its training data, patterns shaped by a society that, in Noble’s example, has sexualised and dehumanised Black women for centuries.

Another study, recently published in Nature, examined nearly 1.4 million images and videos across Google, Wikipedia, IMDb, Flickr, and YouTube, along with nine major language models. The researchers found that women are consistently represented as younger than men across occupations and social roles, despite there being no actual age difference in the workforce. The gap was starkest for high-status, high-earning occupations.

Again, the machine didn’t invent this bias. The machine inherited it. And then, with the relentless efficiency of any advanced sorting system, it systematised and amplified it.

The Seduction of “Understanding”

This is where the language we use about artificial intelligence becomes actively dangerous.

When engineers or marketers say an algorithm “learns” or “understands,” they are speaking metaphorically. And to be fair, the people who use these terms know they are metaphors. But these are metaphors doing more work than they should. What these engineers really mean when they say AI understands anything is that the system identifies statistical patterns in data and uses those patterns to make predictions. It has no theory of the world. It has no sense of meaning. It lacks the embodied, conscious experience that either would require. So when it processes words, it does not understand what those words refer to, any more than a pocket calculator understands what “seven” means when you press the button.

We say AI “thinks,” “decides,” and “understands.” It does none of these things. And the danger isn’t that the machine will develop intentions. The danger is that we’ll be so enamoured of its knack for mimicking us that we forget the machine has no intentions at all, and therefore no capacity to notice when its outputs are causing harm.

But even more worrying is where we place the blame when we realise that they are. Because the intention does not belong to the machine. It belongs to us.

On the Similarities Between Advertising and Algorithms

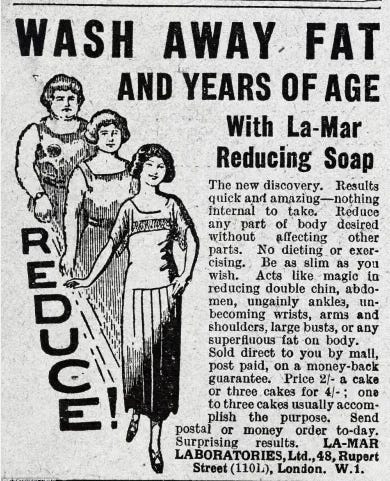

Anyone who has spent time studying how advertising actually works (as opposed to how critics imagine it works) knows a rather deflating truth: advertising is not particularly good at changing what people want. What it excels at (what the effective kind excels at, anyway) is channelling existing desires toward specific objects.

Consider a diet drug advertisement. The best ones don’t attempt the Herculean task of convincing you that you want to be thinner. You already want that. Society has spent decades establishing this particular conception of beauty, and the desire is well-entrenched before the advertisement ever arrives. The advertisement simply says: “Since you already want this, here is a thing that claims to help.” It takes the existing river of desire and diverts it slightly toward a particular product.

An advertisement that said “You are beautiful exactly as you are” might be morally admirable. But it would also sell very little. Not because advertising people are cynical (though some are), but because they have learned, through expensive failure, that advertisements are mirrors, not motors. They reflect existing desires; they rarely create new ones.

This is why those who fear advertising’s power to reshape society are, for the most part, worrying about the wrong thing. And it is why those who hope advertising might reshape society for the better (making us more environmentally conscious, more racially equitable, or more accepting of diverse bodies) are usually disappointed by the results. This is not to say that it can’t be done. It can. But it requires decades of sustained effort and institutional backing, and even then it typically works only by finding some existing desire to exploit. (Nestlé famously spent generations introducing coffee flavours to children through candies and cartoons, not to create a love of coffee from nothing, but to establish a nostalgic association that would pay dividends when those children became coffee-buying adults.)

The Same Thing Applies to AI and Algorithms

When LinkedIn’s algorithm promotes certain posts and demotes others, it isn’t making a judgment about quality. It’s matching patterns it has learned from billions of historical interactions about which kinds of posts tend to generate engagement, and then promoting posts that match those patterns.

The algorithm doesn’t know that those patterns may encode structural inequality. The algorithm doesn’t know anything. It has no power of anticipating any relations or truths. It is, as Ada understood, merely following analysis.

Now that I, thanks to people like Gillian, understand this, the question becomes: what can I do about it?

And here is where I may differ with some of the people who are marginalised by this bias (and who have also thought longer and harder about it than I have).

Why “Fixing the Bias” Often Makes It Worse

The obvious solution is to fix it. Train the algorithm not to be biased.

The problem is that this assumes a level of understanding that doesn’t exist. Training a child not to be biased is one thing. Training a machine is rather like telling someone to stop thinking about elephants. You’ve now made elephants the centre of their attention.

The machine has one purpose: to show people the content they want to see. If people, whether through conscious or unconscious bias, tend to engage less with content from certain voices, the algorithm learns that pattern and serves it back, amplified and systematised.
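A toy simulation makes the “amplified” part concrete. It assumes, hypothetically and far more crudely than any real feed, two creators whose posts convert impressions into engagement at exactly the same rate, a feed that gives the top slot to whoever has more accumulated engagement, and made-up slot sizes. Only the starting point differs, by two points. The function name and every number are illustrative assumptions.

```python
def simulate_feed(start_a, start_b, rounds, top_slot=10, other_slot=2, rate=0.5):
    """Toy rich-get-richer loop between two equally engaging creators (hypothetical).

    Each round, whoever has more accumulated engagement takes the top slot
    (more impressions). Both turn impressions into engagement at the SAME rate,
    so any growing gap comes from the ranking, not from the content.
    """
    a, b = float(start_a), float(start_b)
    history = [(a, b)]
    for _ in range(rounds):
        if a >= b:
            a += top_slot * rate
            b += other_slot * rate
        else:
            b += top_slot * rate
            a += other_slot * rate
        history.append((a, b))
    return history


for a, b in simulate_feed(51, 49, rounds=5):
    print(f"A={a:.0f}  B={b:.0f}  gap={a - b:.0f}")
# last line printed: A=76  B=54  gap=22
```

A two-point head start becomes a twenty-two-point gap in five rounds, without either creator’s content getting any better or worse. That is the rich-get-richer dynamic in miniature.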

And here’s the deeper problem: gender isn’t even one of the ranking signals. According to LinkedIn, it never was. Yet the algorithm discriminates anyway, because it discovers proxies. Remove those proxies, and it finds others. Block language patterns, and it finds career gaps. Block career gaps, and it finds network structures. Block network structures, and it finds topic choices.
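The whack-a-mole problem can be sketched in a few lines, too. This assumes a handful of made-up profiles whose hidden group label happens to correlate with a career-gap flag, a network cluster, and a topic. The hypothetical `recoverability` function asks how often the group could be guessed from whatever features remain after others are blocked. Because it is measured on the same tiny dataset it learns from, it overstates what a real model would manage; the point is the pattern, not the numbers.

```python
from collections import Counter, defaultdict

# Hypothetical profiles. "group" is hidden from any ranking model; the other
# fields are the kinds of proxies described above.
PROFILES = [
    {"group": "A", "career_gap": False, "network": "n1", "topic": "x"},
    {"group": "A", "career_gap": False, "network": "n1", "topic": "y"},
    {"group": "A", "career_gap": True,  "network": "n1", "topic": "x"},
    {"group": "B", "career_gap": True,  "network": "n2", "topic": "z"},
    {"group": "B", "career_gap": True,  "network": "n2", "topic": "x"},
    {"group": "B", "career_gap": False, "network": "n2", "topic": "z"},
]


def recoverability(profiles, features):
    """Share of profiles whose hidden group is guessed correctly from `features` alone.

    For each combination of feature values, guess the most common group seen
    with it. With three A and three B profiles, 0.5 is the coin-flip baseline;
    1.0 means the remaining features fully reveal the group.
    """
    groups_by_key = defaultdict(Counter)
    for p in profiles:
        key = tuple(p[f] for f in features)
        groups_by_key[key][p["group"]] += 1
    # Guessing the majority group for each key is right exactly "majority count" times.
    correct = sum(counts.most_common(1)[0][1] for counts in groups_by_key.values())
    return correct / len(profiles)


for blocked, kept in [
    ([], ["career_gap", "network", "topic"]),
    (["career_gap"], ["network", "topic"]),
    (["career_gap", "network"], ["topic"]),
    (["career_gap", "network", "topic"], []),
]:
    print(f"blocked={blocked}: {recoverability(PROFILES, kept):.2f}")
# prints 1.00, 1.00, 0.83, 0.50 in turn
```

Blocking career gaps changes nothing here, because network structure still gives the group away, and even topic choice alone stays well above the coin-flip baseline of 0.50.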

And because of this, debiasing an algorithm through programming or training wouldn’t work either. To “debias” an algorithm, you would have to teach it to identify and then compensate for protected characteristics, which means the system must become exquisitely sensitive to exactly the categories we claim to want it to ignore.

The only way to stop this would be to change the algorithm’s purpose entirely. But that would mean changing the business model of every social media platform, which is another way of saying: obliterating it.

In other words, the bias isn’t a bug in the system. The bias is the system—or rather, the bias is in us, and the system is an uncomfortably efficient mirror.

And the tool that surfaces the symptom is rarely the tool that cures the disease.

The Algorithm Isn’t Biased. We Are.

This is why, as I argued earlier about advertising, the algorithm is not where this kind of systemic change should take place. And not just because algorithms are poorly suited to social engineering from a capitalist perspective. But because whenever you try to use algorithms to solve problems that show themselves in algorithms (or worse, use algorithms to fix algorithms), you tend to make the problem worse.

Which, with apologies to Godwin, brings me back to the comments section of Dr. Gillian Marcelle’s post, where I identified myself not as white, but as a Jew and a descendant of Holocaust survivors. But this time, I bring up Nazi Germany not to defend myself against racism, but to warn about what happens when we try to use systematic tools to “solve” human categorisation problems. And to do so, I want to start, once again, with advertising.

The Punch Card and the Sorting Hat

Hitler established the Reich Ministry of Public Enlightenment and Propaganda within weeks of taking power, placing Joseph Goebbels in control of films, radio, newspapers, books, education, theatre, and art simultaneously. For twelve years, the German population was subjected to a comprehensive, coordinated, relentless messaging environment. Every medium spoke with one voice.

But it’s important to note that even this effort (with a Ministry of Propaganda, legal authority, a captive population, and total media control) was only effective where it could build on existing prejudices. A 2015 study published in PNAS found that Nazi indoctrination was “highly effective,” but only where it could tap into preexisting antisemitic prejudices (which, tragically, were not difficult to activate). Goebbels himself understood this, which is why he often argued that propaganda could only succeed if it was “broadly in line with preexisting notions and beliefs.”

Eric Hoffer, writing in 1951, also understood this principle with uncomfortable clarity. In The True Believer, his famous study of mass movements, the Longshoreman Philosopher observed that propaganda cannot force its way into unwilling minds, nor can it instil something wholly new. It penetrates only into minds already open, and rather than create opinion, it articulates and justifies opinions already present in the minds of its recipients.

In other words, even the Nazis, with resources and control that no commercial advertiser could dream of, were bound by the mirror problem. They rose to power by amplifying existing hatreds that they could then systematise and institutionalise. With enough people on their side, all they had to do was give social permission for what was already lurking in certain hearts. But they did not, even with all their power, create those hatreds from nothing.

All they needed next was a faster, better way to act on those hatreds.

In the 1930s and 1940s, the most sophisticated information technology in existence was the IBM punch card. Each card, about the size of a dollar bill, could store detailed information about anything, depending upon the arrangement of holes punched into rows and columns. The cards would be fed into high-speed readers, and out would come (for the first time in human history) not merely numbers, but information. So, for example, you could count not only how many people were in a room, but how many of them were men, how many women, how many of a particular religion, how many lived in a particular district, and how many worked in a particular trade.

This was marketed by IBM, quite reasonably, as efficiency.

Unfortunately, the Nazi regime thought so, too.

According to Edwin Black’s research, the Third Reich leased IBM’s Hollerith machines to help address what it considered a human logistical problem. The regime used the machines to cross-tabulate religion, nationality, profession, and address. And now, at twenty-five thousand cards per hour, it could identify exactly how many Berlin or Viennese furriers were Jews of Polish extraction.

In Black’s account, the trains that delivered people to the camps ran on these punch cards, and the camps even maintained Hollerith departments to track prisoners. Not many people know this, but Black also reports that the numbers tattooed onto the forearms of prisoners at Auschwitz began as IBM identification numbers designed for compatibility with those punch cards.

In other words, the Nazi regime wanted its machines to achieve a specific task. In Ada’s words, it knew how to order them to perform. And the machines (innocent of meaning and incapable of moral reasoning) executed those orders with perfect fidelity. This combination of propaganda and algorithm was perhaps the most systematic, well-funded, total-spectrum attempt in modern history to reshape not just human belief, but also human demographics.

If you are disgusted with the engineering-like language I am using to describe one of the worst mass murders in human history, then that is exactly the point.

As a Jew, I would find it very easy to hate computers because of this. To see them as evil. To shout at the top of my lungs that this is what happens when you let computerised systems run social institutions. But the truth of the matter is, the machine did not hate anyone. The machine did not know what a Jew was, what Poland was, what death was. The machine merely sorted information and presented it the way the human beings (in this case, Nazis) wanted it presented. And while I can point fingers at IBM for allowing its technology to be used for such purposes, I cannot blame the technology itself.

Algorithms, like advertisements (or propaganda), are mirrors with memory.

They reflect what already exists, but with perfect recall and relentless consistency. Unlike a human, the algorithm never tires, never questions, never feels uncomfortable about what it’s reflecting. If it dehumanises, it does so simply because it inherited a world that had dehumanised races of people deemed impure by those who controlled it, and it did what efficient machines do. It sorted, categorised, and served up that inherited reality with frictionless speed.

The algorithm didn’t cause the bias. But it also didn’t resist it. And that, perhaps, is where the true danger lies. Because when a machine does something, we tend to assume (especially given its efficiency, and especially if we are the ones using it) that it must be objective, or that whatever it says must be true.

If You Can’t Use the Algorithm to Change the Algorithm, What Can You Do?

If you want to change culture (actually change it, not just express good intentions about changing the way advertising and algorithms work), advertising and algorithms are probably the wrong tools to use.

This bias isn’t a problem that better engineering alone can (or, as the Nazis and their IBM punch cards show, should) solve. The change has to happen elsewhere first. I have no idea exactly where. In a country’s laws, perhaps. Or with new institutions. Or through education. All I know is that whatever the solution is, it won’t be fast. It will come through the slow, difficult, unglamorous work of shifting what people believe, before the algorithms have anything different to reflect. And that is where I stop talking and start listening to people like Dr. Gillian Marcelle.

Reciprocity and Solidarity Are Verbs

This doesn’t, however, mean that you and I (as people who benefit from the bias latent in the technology) can’t do anything.

Dr. Marcelle said it beautifully in her post:

“Reciprocity and solidarity are verbs.”

Not nouns. Not feelings. Not things you believe in. Things you do.

I may be doing her an injustice, but it seems to me that what she was pointing out (with rather more patience than I deserved) is this:

If you benefit from a structural advantage (and I do, whether I identify with it or not), then reciprocity means using that advantage to amplify voices that are being made invisible. And solidarity means noticing the potential bias at play when your newsletter’s simplification of a problem gets called “definitive” while someone else’s forty years of expertise gets ignored, and asking why.

It means reading the scholars who have been doing this work longer and more deeply: Safiya Noble’s Algorithms of Oppression, Ruha Benjamin’s Race After Technology, Meredith Broussard’s Artificial Unintelligence, and Emily M. Bender and Alex Hanna’s The AI Con (as well as the fascinating perspectives of Dr. Joy Buolamwini and Timnit Gebru).

It means understanding that when Gillian calls me out, she is doing me a favour: offering me a perspective and an education I couldn’t reach on my own, precisely because the bias was working in my favour.

Which brings me back to Ada.

What Ada Would Tell Us Now

Ada Lovelace died at thirty-six, the same age as her father, Lord Byron, whom she never knew. Despite his abandoning her at birth, she asked to be buried beside him. She left behind notes that anticipated the modern computer by a century, and a single observation about the limits of mechanical intelligence that we have largely chosen to forget—perhaps because she was a woman, perhaps not.

Ada’s approach was “poetical science.” The discipline of understanding how the machine works, combined with her father’s imagination, led her to ask more difficult questions: not whether it could work, but whether it should. She was, by many accounts, the world’s first computer programmer. And yet her story remains an obscure footnote to Babbage’s and, for those who do know it, a symbol of a kind of hyperintelligence.

Which is another way of saying that this kind of bias has been going on for a long time: at least as long as there have been algorithms, and most probably longer.

But it’s not the technology that’s doing the discrimination. The responsibility for what gets sorted, what gets amplified, and what gets silenced belongs to us.

Gillian reminded me of this. Emily reminded me of this. Philip reminded me of this. They did the intellectual and emotional labour of educating me, of holding me accountable, and of inviting me to do better. And this admittedly somewhat obvious and shallow newsletter, which starts with the story of Ada Lovelace and calls out the vital work of other women and marginalised voices (whether I agree with them or not), is my first attempt to do so.

That’s what actual solidarity looks like. Not defending my identity. Not explaining away the bias. But seeing it clearly and acknowledging the possibility that the algorithm heard me because of who I am, not just because of what I said.

The fact that people liked what I had to say was a compliment.

The fact that it was easier for me to get attention for it has now become a responsibility.

One I intend to take seriously. Starting now.

Thank you, Dr Marcelle.

-Justin (adland’s newest philosopher) Oberman

P.S. I still believe publicity and showmanship matter for building a personal brand online. But I’m now wrestling with whether that advice means the same thing for everyone, and whether telling marginalised voices to “just be better at self-promotion” is asking them to work harder to overcome a system that was never designed to hear them in the first place. Next week I will address Dr. Gillian Marcelle’s critique of that.
